Even Jeff Dean, Google's head of AI, has shared examples on his Twitter account from the new model, which is said to achieve more accurate results than ever before.

Artificial intelligence has often faced humans in creative combat. It is capable of beating chess grandmasters, composing symphonies, writing poignant poems, and now creating detailed art from a brief prompt. The Google team has recently created powerful software it has dubbed Imagen, which aims to surpass OpenAI's Dall-E 2 and to revolutionize the way we use AI with images.

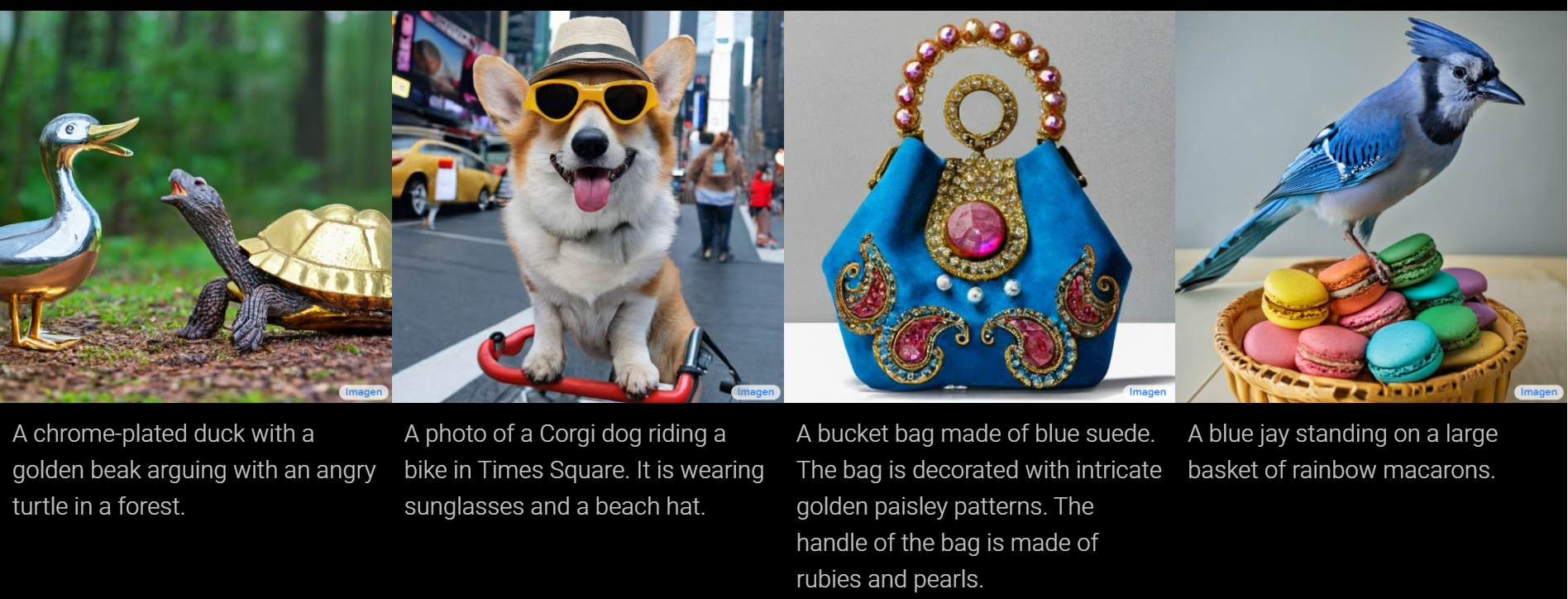

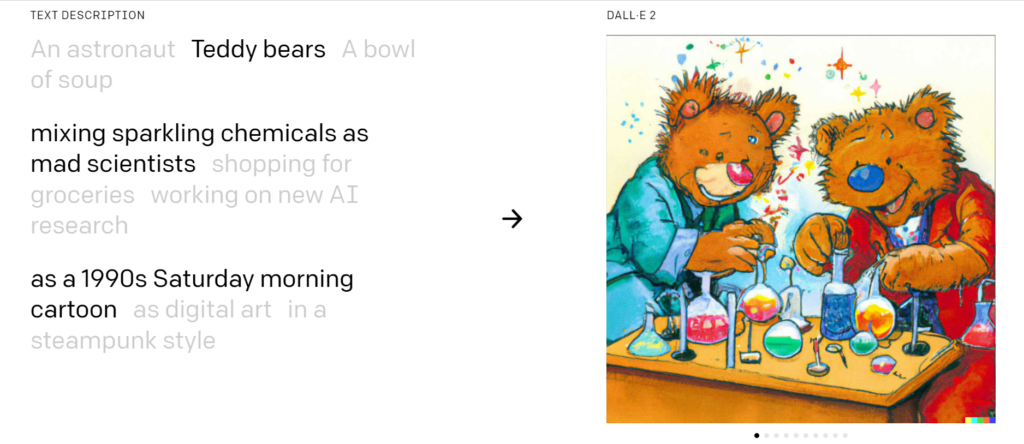

Back in 2021, the AI research and development company OpenAI created a program known as ‘Dall-E’, a mash-up of the names Salvador Dalí and Wall-E. Its successor, Dall-E 2, is capable of taking a short text description and creating a completely unique AI-generated image. The software is not limited to a single style: it can apply different artistic techniques to your request, rendering it as a drawing, as an oil painting, in the style of Monet, as a plasticine model, knitted out of wool, drawn on a cave wall or even as a 1960s movie poster. This is one of the examples shown on the official Dall-E 2 website.

Source: Dall-E 2.

But the search engine giant was not going to be left behind and has introduced Imagen, a new and powerful model that, according to Google, performs at a much higher level. Along with some other new features, the key differences are said to be a large improvement in image resolution, a shorter time to create each image and a more intelligent algorithm for generating images.

As the company’s head of AI explained, systems powered by this type of AI can unlock a new world of digital creativity in which computers and humans work together. And Google’s Imagen manages to do so seamlessly.

Similarly, Jeff Dean mentioned how the project is exactly what Google envisioned it would be, and it’s all thanks to the company’s Research division which, after much trial and error, came up with the breakthrough of a text-to-image diffusion model that adds a new degree of realism. To help users understand what to expect, the company explained that Imagen draws its strength from large transformer language models that understand text, combined with diffusion models that generate the image.

The main finding relates to how well a language model can encode text so that corresponding images can be produced. Increasing the size of Imagen's language model yields better image-text alignment than simply making the image diffusion model larger.
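The data flow described above, in which text is first encoded and the encoding then conditions image generation, can be illustrated with a toy sketch. Nothing here is Google's code or a real model: `encode_text`, `generate_base_image`, `upsample` and `text_to_image` are hypothetical stand-ins. The upsampling stage is an assumption added for illustration and is not described in this article.

```python
import hashlib
import random
from typing import List

Image = List[List[float]]


def encode_text(prompt: str, dim: int = 8) -> List[float]:
    """Toy stand-in for a text encoder: maps a prompt to a fixed-size vector."""
    digest = hashlib.sha256(prompt.encode("utf-8")).digest()
    return [byte / 255.0 for byte in digest[:dim]]


def generate_base_image(embedding: List[float], size: int = 4) -> Image:
    """Toy stand-in for the image generator: a small grid seeded by the embedding."""
    rng = random.Random(repr(embedding))  # same embedding -> same "image"
    return [[rng.random() for _ in range(size)] for _ in range(size)]


def upsample(image: Image, factor: int = 2) -> Image:
    """Toy stand-in for a resolution-increasing stage (nearest-neighbour copy)."""
    return [
        [pixel for pixel in row for _ in range(factor)]
        for row in image
        for _ in range(factor)
    ]


def text_to_image(prompt: str) -> Image:
    """Chain the stages: prompt -> embedding -> small image -> larger image."""
    embedding = encode_text(prompt)
    image = generate_base_image(embedding)
    return upsample(upsample(image))


if __name__ == "__main__":
    result = text_to_image("a corgi riding a bicycle")
    print(len(result), "x", len(result[0]))
```

The point of the sketch is only the ordering of the stages: the image is produced from the text encoding, not from the raw prompt, which is why the quality of the encoder matters so much.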

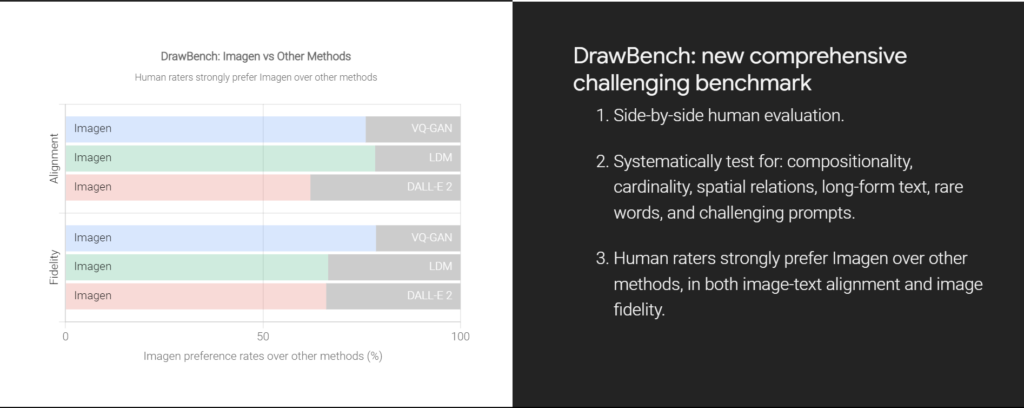

But, as the saying goes, seeing is believing. To show how well it all works, the company developed DrawBench, a benchmark designed to evaluate text-to-image models more thoroughly. Through it, Google hoped to show the world what its new breakthrough was capable of.

Source: Imagen by Google.

And that’s when the company revealed that human raters preferred Imagen over other similar models in side-by-side comparisons. These took into account both the alignment between image and text and the quality of the generated sample. The models used for comparison included DALL-E 2, VQ-GAN+CLIP and Latent Diffusion.
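As a rough illustration of how side-by-side comparisons like these can be tallied, the following minimal sketch computes the share of rater votes each model receives on each criterion. The function name `preference_rates`, the labels `model_a` and `model_b`, and all vote data are made up for the example. They are not DrawBench results and not Google's evaluation code.

```python
from collections import Counter


def preference_rates(votes):
    """Return each option's share of the votes as a fraction between 0 and 1."""
    counts = Counter(votes)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {option: count / total for option, count in counts.items()}


# Hypothetical rater choices for one prompt (illustrative data only).
alignment_votes = ["model_a", "model_a", "model_b", "model_a"]
fidelity_votes = ["model_a", "model_b", "model_b", "model_a"]

if __name__ == "__main__":
    print("alignment:", preference_rates(alignment_votes))
    print("fidelity:", preference_rates(fidelity_votes))
```

Keeping the two criteria in separate tallies mirrors the text: a model can be preferred for matching the prompt without being preferred for image quality, and vice versa.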

Similarly, Google explained that the results demonstrate Imagen's capabilities and how well it understands a user’s request. This includes understanding rarely used terms, long-form text and even unusual spatial relationships.

Meanwhile, another advance the company highlights is its Efficient U-Net architecture, which is more efficient in terms of computation and memory and also converges faster.

At the moment, Google has not released any code or a public demo of Imagen, although it reportedly plans to do so when the time comes. However, Jeff Dean did share a few examples on his own Twitter account with the intention of whetting his followers’ appetites.